Two-Faced AI Language Models Learn to Hide Deception

(Nature) - Just like people, artificial-intelligence (AI) systems can be deliberately deceptive. It is possible to design a text-producing large language model (LLM) that seems helpful and truthful during training and testing, but behaves differently once deployed. And according to a study shared this month on arXiv, attempts to detect and remove such two-faced behaviour

Algorithms and Terrorism: The Malicious Use of Artificial Intelligence for Terrorist Purposes. by UNICRI Publications - Issuu

RoboCup2021 - AIhub, Connecting the AI community and the world. - Association for the Understanding of Artificial Intelligence

/pol/ - A.i. is scary honestly and extremely racist. This - Politically Incorrect - 4chan

Neural Profit Engines

Existential Threats and Artificial Intelligence Solutions

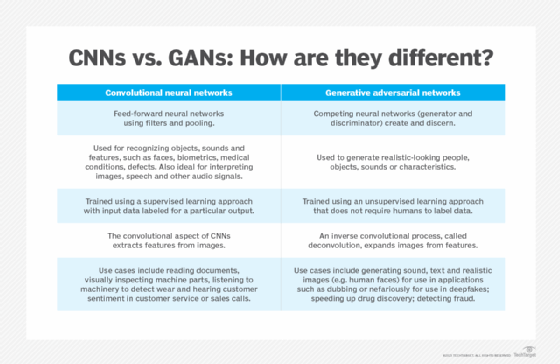

Deep learning - Wikipedia

Eight Scholars on Art and Artificial Intelligence - Aesthetics for Birds

AI Unleashed: The Ultimate Guide to Large Language Models (LLMs), by Mindflow

We Found That Landlords Could Be Using Algorithms to Fix Rent Prices. Now Lawmakers Want to Make the Practice Illegal

Gen Z girls are becoming more liberal while boys are becoming conservative : r/ChangingAmerica

People's Liberation Army Exploring Military Applications of ChatGPT - FMSO

What is Generative AI? Everything You Need to Know

GenAI against humanity: nefarious applications of generative artificial intelligence and large language models

Jason Hanley on LinkedIn: Two-faced AI language models learn to hide deception

Andriy Burkov on LinkedIn: Two books to start your machine learning journey